We started building Reactor in 2015. The first release came in 2016. We built it because the tool we needed did not exist, and we think it still fills a gap that a lot of developers do not realize is there until they run into it.

What the options looked like in 2015

Unity developers had two realistic choices at the time.

The first was Photon. Photon was popular for good reason: a developer could sync data between clients with a few API calls and never think about a server. That is genuinely fast to get started with. The problem is that Photon was a relay. It had no context about your game world. It just moved messages between clients. The server did not know what was in the scene, what the rules were, or what state was authoritative. That works for simple games where clients can be trusted and latency is forgiving. It breaks down when you need physics simulation, large numbers of entities, or any situation where client authority is exploitable. Pricing added friction too. You had to estimate your peak concurrency and buy CCUs upfront. Guess wrong and you were either overpaying or scrambling.

The second was Unity’s own networking solution, or the dedicated-server approach that Unreal takes. These were more capable. They were built around real server authority and had the track record to prove it. The cost was significant backend engineering. Deploying and managing dedicated servers requires infrastructure expertise: provisioning, monitoring, security, scaling under load. That is a full discipline on its own. For a team building a game, it is usually a discipline nobody on the team has. Even if someone did, it would consume time that could have gone into the game itself.

The gap

We wanted a system that could handle the full range of game types: turn-based, action, physics simulation, MMO, MMOFPS. We wanted developers to never have to touch a server. No DevOps. No backend engineering. Full server authority. That combination did not exist.

Our first attempt was, in hindsight, a useful mistake. We built something closer to Photon but with a richer API. Clients could place objects in a world, describe how they should behave, and the server would simulate and sync them. It made more complex games possible than a plain relay could. But the client-side API kept growing to cover every case we wanted to support, and games ended up making too many calls from client to server. We had built a more capable relay, not what we were actually after.

Starting over

We scrapped the approach and started from the server side. We had already built a lightweight simulation server using Bullet physics. We expanded it to include a scripting engine, built a server-authoritative controller system, and added a Unity integration layer on top so the Unity workflow could be used for server scripting. Developers write game logic in the Unity environment they already know. The server runs it.

Unity was a natural choice at the time. It was an up-and-coming engine that performed well and was easy to work with. We chose it and we have not had reason to regret it.

This foundation became the basis for Scene Fusion as well, our real-time collaborative scene editing tool for Unity. Scene Fusion and Reactor share the same underlying synchronization system. Building Scene Fusion pushed the backend infrastructure hard in ways a game alone would not have, and several components that ended up in Reactor came directly from that work.

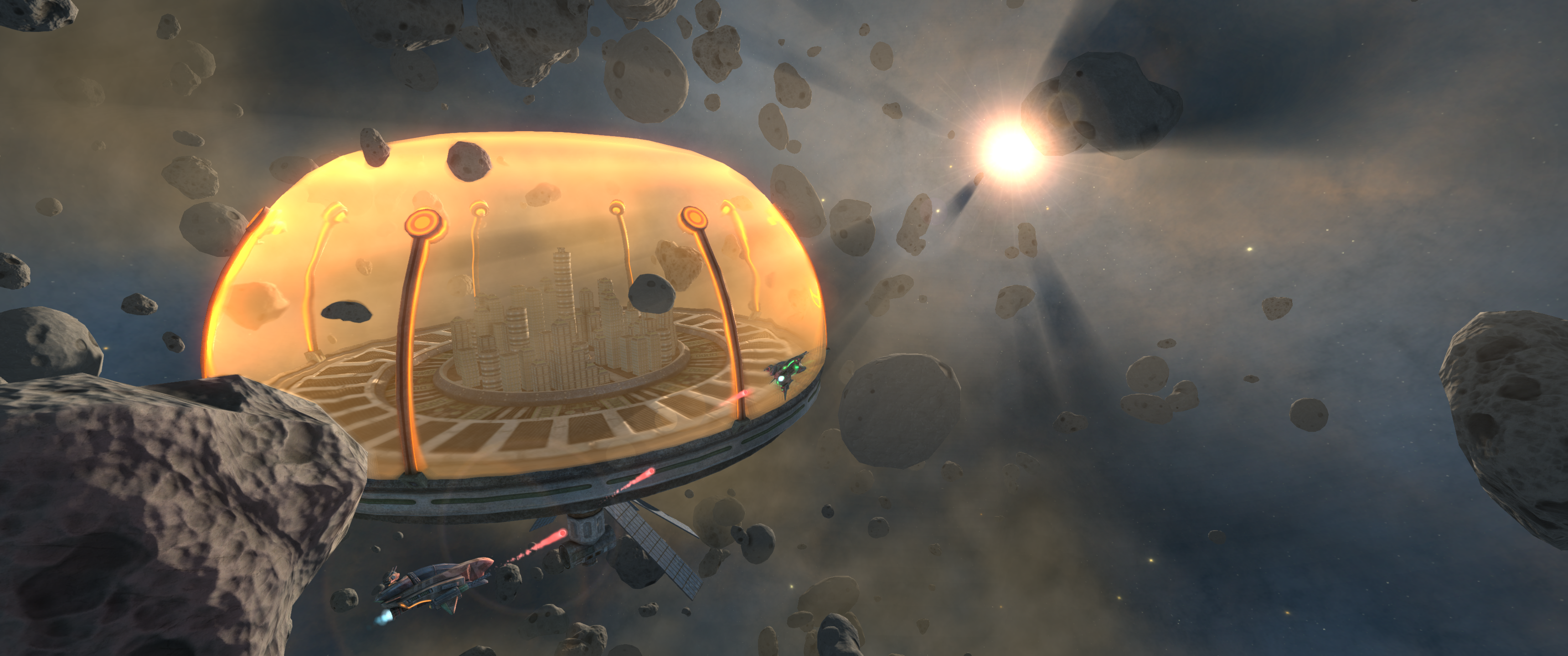

We also built games ourselves and with partners. Repulsor was an early demo from 2016: an asteroid field with hundreds of physics objects, a destructible base, and players fighting each other in the environment. It was a useful stress test for the engine at the time.

Kazap.io was one of the first commercial games we shipped. A later collaboration gave us a direct side-by-side comparison: the same game concept, built twice, once without Reactor and once with it. Same developer, same audience. The results are documented.

Bandwidth

Bandwidth is the constraint that determines what is possible in a multiplayer game. More bandwidth per player means smaller player counts, higher hosting costs, and harder limits on what you can simulate. We focused on reducing it with as little developer input as possible, because better efficiency means bigger games or a lower bill, and ideally both.
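To make that trade-off concrete, here is a rough back-of-the-envelope sketch in Python. The function names and every number in it are hypothetical, chosen only to show the arithmetic. It is not a measurement of Reactor or any other product, and it assumes every player receives updates for the same number of entities.

```python
def egress_bytes_per_second(players, entities_per_player,
                            bytes_per_update, updates_per_second):
    """Total bytes the server sends each second (simplified model)."""
    return players * entities_per_player * bytes_per_update * updates_per_second


def max_players(budget_bytes_per_second, entities_per_player,
                bytes_per_update, updates_per_second):
    """Largest player count that fits inside a fixed outbound budget."""
    per_player = entities_per_player * bytes_per_update * updates_per_second
    return budget_bytes_per_second // per_player


# Hypothetical inputs: a 100 Mbit/s link, 200 visible entities, 20 updates/s.
BUDGET = 12_500_000  # bytes per second

players_at_24_bytes = max_players(BUDGET, 200, 24, 20)
players_at_12_bytes = max_players(BUDGET, 200, 12, 20)
```

In this simplified model, halving the bytes per entity update doubles the number of players that fit in the same budget, or halves the egress for the same player count.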

Our first implementation was a bit-packing solution built for speed. It evolved into what we have now: complex data models that are automatically generated and synchronized to minimize bytes on the wire. The developer does not configure compression. It happens by default.
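For readers who have not seen bit-packing before, the general idea can be sketched in a few lines of Python. This is an illustration of the technique only, not Reactor's implementation: the value range, the 0.01 precision, and all function names are assumptions made for the example. Each coordinate is quantized to an integer with just enough bits to cover its range, and the three integers are packed back to back.

```python
import math


def bits_needed(lo, hi, precision):
    """Bits required to index every `precision`-sized step in [lo, hi]."""
    steps = math.ceil((hi - lo) / precision)
    return max(1, steps.bit_length())


def pack_position(pos, lo=-512.0, hi=512.0, precision=0.01):
    """Quantize three coordinates and pack them into the fewest whole bytes."""
    width = bits_needed(lo, hi, precision)
    packed = 0
    for component in pos:
        clamped = min(max(component, lo), hi)       # keep the value in range
        q = round((clamped - lo) / precision)       # quantize to an integer
        packed = (packed << width) | q              # append `width` bits
    nbytes = (3 * width + 7) // 8                   # round up to whole bytes
    return packed.to_bytes(nbytes, "big")


def unpack_position(data, lo=-512.0, hi=512.0, precision=0.01):
    """Reverse pack_position; the result is accurate to about precision / 2."""
    width = bits_needed(lo, hi, precision)
    packed = int.from_bytes(data, "big")
    mask = (1 << width) - 1
    # Components were appended x, y, z, so x sits in the highest bits.
    return tuple(lo + ((packed >> (i * width)) & mask) * precision
                 for i in (2, 1, 0))


payload = pack_position((1.234, -20.5, 511.99))
restored = unpack_position(payload)
```

With these assumed settings each coordinate needs 17 bits, so a position fits in 7 bytes instead of the 12 bytes that three 32-bit floats would take. The cost is that values are only recovered to within the chosen precision.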

We benchmarked it against Photon Fusion 2 and Unity Netcode for GameObjects using a standard scene. Same entities, same update rate, measured directly. Reactor's bandwidth use was lower by a factor of 9.5. The benchmark is available on GitHub if you want to run it yourself.

Where things stand now

The managed hosting question, the one that pushed us to build this in the first place, is handled by Reactor Cloud. Developers deploy to our infrastructure with one click. No servers to provision, no monitoring to set up, no scaling decisions to make. The free tier covers development and small deployments.

On authority, we added shared authority between Unity and the server. The server can assign authority over all or part of an entity to any connected Unity client or headless server. It gives back some of the flexibility of the original client-driven approach without giving up the correctness guarantees of a server-authoritative foundation.
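One way to picture shared authority is as an ownership table that the server controls. The Python sketch below is a conceptual model only: the `Entity` class, its method names, and the client identifier are hypothetical and do not reflect Reactor's actual API. Authority over a whole entity, or over individual properties, can be handed to another participant, and a write is accepted only when it comes from whoever currently holds authority for that property.

```python
SERVER = "server"


class Entity:
    def __init__(self, **properties):
        self.properties = dict(properties)
        self.default_owner = SERVER   # authority over the entity as a whole
        self.property_owner = {}      # per-property overrides

    def assign_authority(self, owner, properties=None):
        """Hand all of the entity (properties=None) or part of it to `owner`."""
        if properties is None:
            self.default_owner = owner
            self.property_owner.clear()
            return
        for name in properties:
            if name not in self.properties:
                raise KeyError(name)
            self.property_owner[name] = owner

    def owner_of(self, name):
        return self.property_owner.get(name, self.default_owner)

    def apply_update(self, sender, name, value):
        """Apply a write only if `sender` holds authority; report the outcome."""
        if name not in self.properties or self.owner_of(name) != sender:
            return False
        self.properties[name] = value
        return True


ship = Entity(position=(0, 0, 0), health=100)
ship.assign_authority("client-7", ["position"])    # part of the entity

moved = ship.apply_update("client-7", "position", (1, 2, 3))   # accepted
cheated = ship.apply_update("client-7", "health", 999)         # rejected
damaged = ship.apply_update(SERVER, "health", 90)              # accepted
```

In this model the client can drive the ship's position, which keeps movement responsive, while health stays under server control and cannot be overwritten by that client.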

The thing we are most excited about right now is our DOTS plugin, currently in beta. Client-side prediction is still being finished, but the core integration is working. Reactor hooked up to a DOTS-based Unity game is a combination we think will open up game types that have not been practical to build before. The bandwidth advantage we have built over ten years matters most at the scale that DOTS makes possible.

If you are building a multiplayer game in Unity, Reactor is free to get started with.